Single Instance, Our ETL system was running on a single EC2 node and we are vertically scaling as and when the need arose.The following are the 3 critical reasons for undergoing a technology transfer, Technology Selection: Kettle vs AWS Glue vs Airflow Deep down in my heart I know if not now, the next customer deployment - i.e larger than the current one is designed to fail. Yet our system rand successfully to cater the needs of my ever-demanding customers. If he has no supporting DevOps and infrastructure team around, that 16 hours shoot north to 18 hours or may be more. Those issues are sufficient for a caring data engineer to keep himself awake for almost 16 hours a day. Stored procedures are the de-facto choice when it comes to data munging, Pls get rid of them.Further, the legacy systems make it almost impossible for the IT team to even simplify the periodical backup(data) process.Delivering reports and analytics from OLTP databases is common even among the large corporations - Significant numbers of companies fail to deploy a Hadoop system or OLTP to OLAP scheme despite having huge funds because of the issues #1 and #2.Assuming software engineers can solve everything and there is no need for a data engineering speciality among the workforce.Low or no funding to invest in tools(and right people) to make life easy for every stakeholder including the paying customer, e.g ETLs, Data Experts, etc.Creating a wow factor is the primary, secondary, and tertiary concern to acquiring new labels, and seldom focusing on the nuances of technology to achieve excellence in delivery Small companies aspire for acquiring major enterprises in their customer list often trivialize the technology problem and treat them as people issues because 0. Those ETL tools came with a conditional free version where the best-of-the-class attorneys fail to understand the conditions. 
Last but not least, licensing them is almost always obscure.Reusability is practically impossible where we have to make copies for every new deployment and.They usually had connectors that made things easy but not extensible for a custom logic or complex algorithms.Workflow management tools that are popularly known as ETLs are usually graphical tools where the data engineer drags and drops actions and tasks in a closed environment.The major problems I encountered are the following, It worked but not without problems, we had a rough journey, we paid hefty prices in the process but eventually succeeded.

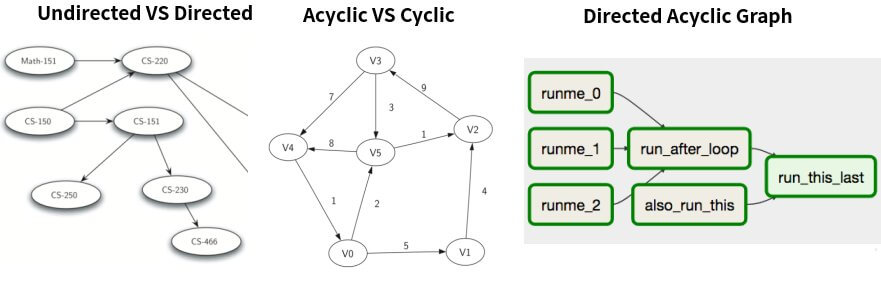

i.e The most voluminous data transfer was around 25-30 million records at the frequency of 30 minutes with a promise of 100% data integrity for an F500 company. When I say large scale, I meant significantly large but not of the size of Social Media platforms' order. IntroductionsĪ robust data engineering framework cannot be deployed without using a sophisticated workflow management tool, I was using Pentaho Kettle extensively for large-scale deployments for a significant period of my career. In the second section, we shall study the 10 different branching strategies that Airflow provides to build complex data pipelines. The objective of this post is to explore a few obvious challenges of designing and deploying data engineering pipelines with a specific focus on trigger rules of Apache Airflow 2.0. I thank Marc Lamberti for his guide to Apache Airflow, this post is just an attempt to complete what he had started in his blog. Image Credit: ETL Pipeline with Airflow, Spark, s3, MongoDB and Amazon Redshift.Source code for all the dags explained in this post can be found in this repo This post falls under a new topic Data Engineering(at scale). In this post, we shall explore the challenges involved in managing data, people issues, conventional approaches that can be improved without much effort and a focus on Trigger rules of Apache Airflow. Building in-house data-pipelines, using Pentaho Kettle at enterprise scale to enjoying the flexibility of Apache Airflow is one of the most significant parts of my data journey. To understand the value of an integration platform or a workflow management system - one should strive for excellence in maintaining and serving reliable data at large scale. I argued that those data pipeline processes can easily built in-house rather than depending on an external product. I was so ignorant and questioned, 'why would someone pay so much for a piece of code that connects systems and schedules events'. 
Airflow Trigger Rules for Building Complex Data Pipelines Explained, and My Initial Days of Airflow Selection and Experienceĭell acquiring Boomi(circa 2010) was a big topic of discussion among my peers then, I was just start shifting my career from developing system software, device driver development to building distributed IT products at enterprise scale.
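The following back-of-the-envelope sketch puts the single-node setup in perspective against the largest transfer mentioned in the introduction: 25-30 million records every 30 minutes. It assumes, purely for illustration, that each batch may use its full 30-minute window and that the schedule runs around the clock. The function names are hypothetical and are not part of Kettle or Airflow.

```python
# Back-of-the-envelope sketch (hypothetical helpers, not from Kettle or Airflow).
# Assumptions: each batch may use its whole scheduling window, and the
# schedule runs 24 hours a day with no skipped runs.

def required_rate(records: int, window_minutes: int) -> float:
    """Records per second needed to move `records` within `window_minutes`."""
    return records / (window_minutes * 60)


def daily_volume(records_per_run: int, interval_minutes: int) -> int:
    """Total records moved per day if a run fires every `interval_minutes`."""
    runs_per_day = (24 * 60) // interval_minutes
    return records_per_run * runs_per_day


if __name__ == "__main__":
    for records in (25_000_000, 30_000_000):
        rate = required_rate(records, window_minutes=30)
        per_day = daily_volume(records, interval_minutes=30)
        print(f"{records:,} records / 30 min -> {rate:,.0f} records/s, {per_day:,} records/day")
```

Under those assumptions, the node has to sustain roughly 13,900 to 16,700 records per second, which adds up to 1.2 to 1.44 billion records a day. Retries and data-integrity checks shrink the effective window, so they would only push the required rate higher.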
